Sound Interfaces and Communication Featured Signal of Change: SoC1079 April 2019

Ubiquitous Interfaces, Constant Interactions (Signal of Change, February 2019) looks at the goal of achieving true ubiquity of interfaces, which gives users a constant connection to the digital world so they can extract information or interact with apps. In particular, speech-recognition technology has moved much closer to providing users with seamless interaction with devices since 2003's Business Implications of New Directions in Speech Technology. Humans and Machines Converse (Signal of Change, December 2018) maintains that the ability to use natural language to engage with applications will spread to virtually every human interaction with digital and electronic systems. Several technologies could advance speech- and sound-driven interaction with devices and the environment. Scan™ mentioned some related conceptual approaches more than a decade ago. For instance, 2004's Advances in Speech Technologies: Pointing the Way to New Applications highlights speechless speech recognition. The timing seems right to take a look at advanced speech- and sound-related interface technologies, which could drive a wide range of applications beyond smart speakers.

Various approaches offer potential ways to facilitate the quiet and surreptitious speech control of devices. For example, researchers at the Massachusetts Institute of Technology (MIT; Cambridge, Massachusetts) are looking at the use of subvocal interfaces. Such interfaces make use of neuromuscular signals that occur when users verbalize commands internally. In simple terms, the technology makes use of physiological processes that occur when people talk to themselves in their minds. A subvocal interface can capture such information via electrodes on the user's jaw and face. The MIT researchers' device, which enables users to ask questions and control devices without speaking, also features bone-conduction headphones that transmit information to users without disrupting their hearing or other tasks they are engaging in. The device looks very obtrusive, covering the lower part of one side of the user's face. Such a device will likely find use only in very focused application areas, potentially in loud industrial or commercial environments where the speechless capture of commands could provide many benefits. Meanwhile, Microsoft (Redmond, Washington) patented a technology that would enable a person to use whispers to interact with speech-enabled devices, thereby preventing the person from disturbing other people in the area. Problematically, the technology, which requires positioning a microphone extremely close to the user's mouth, calls for the user to inhale while whispering to prevent distorting the input with exhaled airflow. Even if the technology were reliable, few people are likely to expend the effort necessary to train themselves to inhale while whispering.

Other approaches could also provide improvements in speech recognition and even lead to solutions that could enable the extraction of speech-related information from a distance. Researchers at Xi'an Jiaotong-Liverpool University (Suzhou, China), an international joint university founded by the University of Liverpool (Liverpool, England) and Xi'an Jiaotong University (Xi'an, China), are working on a technology that will improve hearing aids but could also support advanced speech-technology systems. The researchers are looking at a way to use visual information to augment the information that sound provides. To that end, a system the researchers are developing would use a camera to capture and track speakers' lip movement, providing information that could lead to a more robust speech-processing experience for wearers of hearing aids, particularly in loud situations. According to Andrew Abel from Xi'an Jiaotong-Liverpool University, "The idea is that an audio signal from a microphone and visual information from a camera can be fed into the same system which then processes the information, using the visual information to filter the 'noise' from the audio signal" ("Research: Developing the Cognitive Hearing Aid," Xi'an Jiaotong-Liverpool University, 4 December 2018; online). The researchers drew inspiration for their system from the cocktail-party effect, in which a person focuses on a conversation partner's speech and tunes out surrounding distracting noise. Such a system could not only help speech-recognition technologies in general but also offer the potential to extract information from video footage that has no sound, for instance. Another technology that could capture sound and improve the ability to extract information for a wide range of applications comes from Nokia Corporation (Espoo, Finland). Developers from Nokia created OZO Sound, software that enables smartphone users to zoom in on sound information while recording video, much as they can zoom in on visual information while recording video or taking photographs. The software enables users to focus selectively on various points in a scene to "capture sound coming from in front (or even behind) the cellphone's cameras and microphones. What's more, the software allows the audio recording to automatically track the selected person, animal, or object. It also allows users to zoom in on a particular sound...and sync the audio zoom with the video zoom" ("Zoom and Focus: These Familiar Video Features Are Coming to Audio," IEEE Spectrum, 15 January 2019; online). For instance, a user could zoom in on his or her children at a playground and then hear what the children are saying to friends. The software even reduces surrounding noise. Technical complications prevent OZO Sound from rolling out as a downloadable app and require smartphone manufacturers to license the software for integration into their phones' built-in audio- and video-capturing software. These conditions create an adoption hurdle for OZO Sound.

Similarly, developers are looking at ways to transmit sound and information in surreptitious ways. For example, researchers at the University of California, Berkeley (Berkeley, California), demonstrated that they could hide speech-based commands for virtual assistants in recordings of music or spoken text. Although these commands are nearly inaudible to the human ear, they can make a virtual assistant interact with smartphones and some other connected devices. Meanwhile, researchers at the MIT Lincoln Laboratory are developing ways to use a laser to send an audible message directly to a person's ear across a distance of several feet. The laser-based approaches do not require the use of any receiver equipment, and they enable the targeted transmission of sound across noisy rooms. Conceivably, such methods could also find use in transmitting commands and information to speech-enabled systems in industrial facilities and other noisy environments. Other researchers are working on enabling technologies that could eventually permit the surreptitious transmission of sound. Scientists from the City University of New York (New York, New York) developed a metamaterial capable of transmitting sound along its edges. The researchers believe that the material's capability to provide abnormally robust sound transmission gives the material multiple application areas, including ultrasound imaging and underwater acoustics.

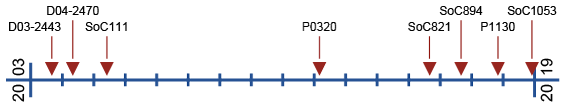

The Development of this Signal of Change

Data Points

- SC-2019-03-06-040

Researchers at Xi'an Jiaotong-Liverpool University (Suzhou, China) are working on a technology that will improve hearing aids. The researchers are looking at a way to use visual information to augment the information that sound provides.

- SC-2019-03-06-012

Developers from Nokia created OZO Sound, software that enables smartphone users to zoom in on sound information while recording video, much as they can zoom in on visual information while recording video or taking photographs.

- SC-2019-05-01-057

Researchers at the MIT Lincoln Laboratory are developing ways to use a laser to send an audible message directly to a person's ear across a distance of several feet.

Implications

Sound Interfaces and Communication

Advanced speech- and sound-related interface technologies could drive a wide range of applications.

Previous Alerts

- D03-2443 — Business Implications of New Directions in Speech Technology (September 2003)

Speech technology is moving from interactive to proactive applications, from enterprise to small-office/home-office applications, from "hard" speech recognition to "soft" speech recognition, and from well-defined markets to widespread penetration.

- D04-2470 — Advances in Speech Technologies: Pointing the Way to New Applications (May 2004)

With increasing integration with other technologies, speech technologies will improve access to information and offer new efficiencies, opening a number of business opportunities.

- SoC111 — The Art and Science of Sound (June 2005)

Experts in acoustics are starting to bring the same innovative spirit that has driven graphics and visual applications to the world of sound. Sonification (the sonic equivalent of visualization) is just the beginning.

- P0320 — Seeing Sound, Hearing Touch (March 2012)

The use and transformation of sound will open manifold applications for data analysis, interface technologies, and even material science.

- SoC821 — Sound and Speech in Advanced HMIs (September 2015)

Sound is data, soundscapes are minable data streams, and speech is becoming a multifaceted input.

- SoC894 — Conversation as a Platform (September 2016)

Emerging technologies that enable conversational interactions with systems and applications could change the way that users interact with and operate applications.

- P1130 — Speech Technologies' Landscape (November 2017)

As voice assistants and speech interfaces become more common, new applications and unintended consequences will emerge.

- SoC1053 — Humans and Machines Converse (December 2018)

The ability to use natural language to engage with applications will spread to virtually every human interaction with digital and electronic systems.